Levels of Evidence

This page is a reference describing our methodology for assigning levels of evidence to regimens.

Important note: Our intent is not to provide clinical decision support. Rather, our goal is to faithfully reproduce findings of clinical trials. Efficacy and toxicity information, in particular, is sometimes presented by authors in a confusing or ambivalent manner. As such, we try to illustrate ambiguities when they happen, and take no responsibility for your decision to choose a particular treatment regimen. Please read our disclaimer for further information.

Note for colorblind users: We are aware that the color scales we use are not colorblind-safe and are not compliant with Section 508. We have no current plans to change the overall color scheme but welcome feedback on this particular point.

See the sections below for a discussion of the various metrics we use. Feedback is welcome!

Note that the structure and content of HemOnc.org are constantly evolving, so information that you read here might be outdated. This page was last reviewed and updated in August 2023.

Evidence

Generally, a regimen should be evaluated in a randomized fashion with an adequate patient sample to be considered strong evidence. We have defined adequate as 20 or more patients per arm. Non-randomized studies with 20 or more patients and randomized studies with fewer than 20 patients per arm are considered to be moderate evidence. Finally, case reports, retrospective series, and non-randomized studies with fewer than 20 patients enrolled are considered to be weak evidence. Of course, there are finer gradations of the quality of evidence, such as whether an RCT was blinded, so this simplified scheme should be taken with a grain of salt.

Evidence is thus reported using one of three color-coded labels, using a sequential ColorBrewer scale:

| Strength of evidence | Wiki code |
|---|---|
| Strong evidence | style="background-color:#1a9851" |
| Moderate evidence | style="background-color:#91cf61" |
| Weak evidence | style="background-color:#ffffbe" |
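
The assignment rules above can be sketched as a small function. This is only an illustration, not the code used by HemOnc.org: the function name, its parameters, and the `EVIDENCE_COLORS` lookup are hypothetical, and the sketch assumes that `n_patients` means patients per arm for randomized studies and total enrollment for non-randomized studies.

```python
# Hypothetical lookup of the background colors listed in the table above.
EVIDENCE_COLORS = {
    "Strong evidence": "#1a9851",
    "Moderate evidence": "#91cf61",
    "Weak evidence": "#ffffbe",
}


def evidence_level(randomized: bool, n_patients: int,
                   retrospective_or_case_report: bool = False) -> str:
    """Return the evidence label for one regimen (illustrative sketch).

    Assumption: n_patients is the per-arm count for randomized studies
    and the total enrollment for non-randomized studies.
    """
    if n_patients < 0:
        raise ValueError("n_patients must be non-negative")
    # Case reports and retrospective series are weak regardless of size.
    if retrospective_or_case_report:
        return "Weak evidence"
    if randomized:
        return "Strong evidence" if n_patients >= 20 else "Moderate evidence"
    return "Moderate evidence" if n_patients >= 20 else "Weak evidence"


if __name__ == "__main__":
    label = evidence_level(randomized=True, n_patients=150)
    print(label, EVIDENCE_COLORS[label])
```

Keeping the retrospective check first mirrors the rule that retrospective analyses are labeled weak no matter how large they are.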

Examples

A trial with strong evidence: R-CHOP for untreated follicular lymphoma

| Study | Evidence |
|---|---|
| Flinn et al. 2014 (BRIGHT) | Phase 3 |

A trial with moderate evidence: bortezomib & rituximab for untreated follicular lymphoma

| Study | Evidence |
|---|---|
| Evens et al. 2014 | Phase 2 |

A trial with weak evidence: cladribine for aggressive systemic mastocytosis

| Study | Evidence |
|---|---|
| Lim et al. 2009 | Retrospective |

Frequently asked questions

Q: What is the current status of evidence labeling on hemonc.org?

A: Nearly 100% of chemotherapy regimens and their variants now have a level of evidence label.

Q: Are all arms of a randomized trial labeled the same?

A: No, it depends on how many patients are in each arm of the trial. For arms that have at least 20 patients, the evidence is labeled as strong. For arms with fewer than 20, the evidence is labeled as moderate.

Q: Are non-randomized trials all labeled the same?

A: No, it depends on how many patients are in the trial. For trials that have at least 20 patients, the evidence is labeled as moderate. For trials with fewer than 20, the evidence is labeled as weak.

Q: Some retrospective analyses are very large; will they be labeled as moderate evidence?

A: No, currently we label all retrospective analyses as weak evidence, no matter how large. Although we are major proponents of the secondary use of data, including automated methods of EHR data extraction, the level of unknown bias and confounding is currently too high to label regimens derived from retrospective data as anything other than weak evidence. Likewise, a trial that reports on a comparison to historic or contemporary controls not enrolled in that trial will be considered non-randomized.

Q: How can I tell which arm of an RCT was the experimental arm?

A: Currently, we do not distinguish between experimental and control arms. However, starting with phase III trials, we will begin labeling the arms using either of the following:

- Phase III (E): an experimental arm of the RCT

- Phase III (C): the control arm of the RCT

Efficacy

Defined generally, efficacy is the presence of a positive effect on the study population. Conversely, lack of efficacy is the absence of an expected positive effect, or the failure to achieve expected outcomes in adequate numbers of patients. Efficacy can be reported ranging from a weak surrogate measure (e.g., response rate) to a direct measure of overall survival; see our responses to treatment page for more information. Currently, we are focusing on adding information on statistical comparative efficacy for randomized trials, and primary endpoints for non-randomized trials with >20 participants. Many non-randomized trials report efficacy compared to historical controls. However, in the rapidly developing field of oncology, this approach is rife with bias and as such we do not report on comparison to historical controls. Future work will involve reporting on effect sizes as well as statistical comparative efficacy (see Miksad et al. 2007 for a discussion of why this is important).

Non-comparative efficacy

Non-comparative efficacy is reported using a multi-hue sequential ColorBrewer scale, where the lighter the color, the higher the response rate. At this time, if a non-comparative trial reports a time interval as the primary endpoint (e.g., PFS), we will still report a response rate, given that time intervals can't be interpreted except as compared to historic controls (see above):

| Response rate | Wiki code |
|---|---|
| 0 to 12.5% | style="background-color:#6e016b; color:white" |
| 12.5 to 25% | style="background-color:#88419d; color:white" |
| 25 to 37.5% | style="background-color:#8c6bb1" |
| 37.5 to 50% | style="background-color:#8c96c6" |
| 50 to 62.5% | style="background-color:#9ebcda" |
| 62.5 to 75% | style="background-color:#bfd3e6" |
| 75 to 87.5% | style="background-color:#e0ecf4" |
| 87.5 to 100% | style="background-color:#f7fcfd" |
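
As a rough sketch of how the table above maps a response rate to a color, the following function picks one of the eight bins. The function name is hypothetical. The table does not say which bin a boundary value such as 12.5% belongs to, so this sketch assumes each bin includes its lower bound and excludes its upper bound, with 100% placed in the last bin.

```python
# Background colors from the table above, darkest (lowest rate) first.
RESPONSE_RATE_COLORS = [
    "#6e016b", "#88419d", "#8c6bb1", "#8c96c6",
    "#9ebcda", "#bfd3e6", "#e0ecf4", "#f7fcfd",
]

BIN_WIDTH = 12.5  # each bin spans 12.5 percentage points


def response_rate_color(rate_percent: float) -> str:
    """Return the background color for a response rate given in percent.

    Assumption: bins are [lower, upper), except that 100% falls in the
    last bin. The source table does not specify boundary handling.
    """
    if not 0 <= rate_percent <= 100:
        raise ValueError("rate_percent must be between 0 and 100")
    index = min(int(rate_percent // BIN_WIDTH), len(RESPONSE_RATE_COLORS) - 1)
    return RESPONSE_RATE_COLORS[index]


if __name__ == "__main__":
    for rate in (10, 12.5, 60, 100):
        print(rate, response_rate_color(rate))
```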

Comparative efficacy

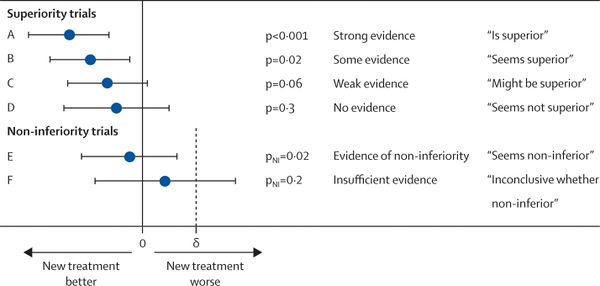

Comparative efficacy is summarized using a seven-level red-yellow-green divergent ColorBrewer scale, along with a narrative description. Hue is a function of p-value for trials that do not report a confidence interval. This is typical for trials published prior to 2000. For trials that do report a confidence interval, we use the bound that is closest to 1 to assign hue. The assignment is based upon the most commonly used 95% CI; however, an increasing number of trials are using alternative confidence intervals and our assignment of hue is still in flux for these. Note: re-assigning hue based on confidence intervals is a work-in-progress.

Superior and inferior findings

Note: values are rounded to the hundredth place.

| Comparative efficacy | p-value | 95% CI | Narrative description | Wiki code |
|---|---|---|---|---|
| Superiority | Less than or equal to 0.01 | Upper bound (UB) is less than or equal to 0.95 | Superior endpoint | style="background-color:#1a9850" |
| Superiority | Greater than 0.01 up to and including 0.05 | UB is greater than 0.95 up to and including 1.00 | Seems to have superior endpoint | style="background-color:#91cf60" |
| Superiority | Greater than 0.05 up to and including 0.10 | UB is greater than 1.00 up to and including 1.05 | Might have superior endpoint | style="background-color:#d9ef8b" |
| Inferiority | Greater than 0.05 up to and including 0.10 | Lower bound (LB) is at least 0.952 and less than 1.00 | Might have inferior endpoint | style="background-color:#fee08b" |
| Inferiority | Greater than 0.01 up to and including 0.05 | LB is at least 1.00 and less than 1.053 | Seems to have inferior endpoint | style="background-color:#fc8d59" |
| Inferiority | Less than or equal to 0.01 | LB is at least 1.053 | Inferior endpoint | style="background-color:#d73027" |
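
The thresholds in the table above can be expressed as two lookup functions, one for p-values and one for the 95% CI bound closest to 1. This is a minimal sketch under stated assumptions: the function names and the `direction` parameter are hypothetical, the caller is assumed to already know whether the finding points toward superiority or inferiority, inputs are assumed to be rounded as reported, and values outside the table return `None`.

```python
from typing import Optional


def label_from_p_value(p: float, direction: str) -> Optional[str]:
    """Map a p-value to the narrative description in the table above.

    direction is "superior" or "inferior" (hypothetical parameter).
    """
    if direction not in ("superior", "inferior"):
        raise ValueError("direction must be 'superior' or 'inferior'")
    if p <= 0.01:
        return f"{direction.capitalize()} endpoint"
    if p <= 0.05:
        return f"Seems to have {direction} endpoint"
    if p <= 0.10:
        return f"Might have {direction} endpoint"
    return None  # not covered by the superior/inferior table


def label_from_ci_bound(bound: float, direction: str) -> Optional[str]:
    """Map the 95% CI bound closest to 1 to a narrative description.

    For "superior", bound is the upper bound (UB);
    for "inferior", bound is the lower bound (LB).
    """
    if direction == "superior":
        if bound <= 0.95:
            return "Superior endpoint"
        if bound <= 1.00:
            return "Seems to have superior endpoint"
        if bound <= 1.05:
            return "Might have superior endpoint"
        return None
    if direction == "inferior":
        if bound >= 1.053:
            return "Inferior endpoint"
        if bound >= 1.00:
            return "Seems to have inferior endpoint"
        if bound >= 0.952:
            return "Might have inferior endpoint"
        return None
    raise ValueError("direction must be 'superior' or 'inferior'")


if __name__ == "__main__":
    print(label_from_p_value(0.03, "superior"))
    print(label_from_ci_bound(1.06, "inferior"))
```

Note that the inferiority thresholds 0.952 and 1.053 are approximately the reciprocals of 1.05 and 0.95, so the two halves of the table mirror each other.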

Negative and non-inferior findings

The distinction between a negative superiority trial and a positive non-inferiority or equivalence study is crucial. In a negative superiority trial, a treatment has been hypothesized to be better than another, but in the end the null hypothesis was not rejected. There is still a distinct possibility that one treatment is superior to the other (type II error), but the signal is not observed due to underpowering issues, excess crossover, attrition such that the intention-to-treat population is small, or obfuscation of a subgroup by the larger population. Non-inferiority trials use a one-sided test to determine whether a new intervention is no worse than a standard intervention. Equivalence trials have a similar design but use a two-sided test, allowing for the possibility that the new intervention is no better than the standard one. These designs require much greater numbers of participants, and are often used to evaluate a new treatment that is likely to have comparable efficacy but has an improved (or different) toxicity profile. It should be noted that non-inferiority designs can be complicated and inconclusive results may be subject to "spin" as described in this article.

To distinguish "negative" superiority from "positive" non-inferiority, we use intensity as well as hue:

| Type of outcome | Narrative description | Wiki code |
|---|---|---|
| A "negative" superiority trial | Did not meet primary endpoint of XXX | style="background-color:#ffffbf" |
| A "negative" non-inferiority trial | Inconclusive whether non-inferior endpoint | style="background-color:#ffffbf" |
| A "negative" equivalence trial | Inconclusive whether equivalent endpoint | style="background-color:#ffffbf" |
| A "positive" non-inferiority trial | Non-inferior endpoint | style="background-color:#eeee01" |
| A "positive" equivalence trial | Equivalent endpoint | style="background-color:#eeee01" |

Magnitude of effect

For time-based outcomes such as overall survival (OS) and progression-free survival (PFS), we intend to report the effect size and an estimate of the effect ratio. Our preferred effect size is the median, that is, the time interval at which 50% of the patients have experienced the event. However, some papers do not report the median and instead report a fixed-interval endpoint, such as the number of patients alive at 12 months. In these cases, we report the endpoint with the shorthand of the number of months at which point the effect is measured. For example, overall survival at 12 months is OS12, progression-free survival at 3 years is PFS36, and so forth. If the median time to the endpoint has not been reached, we use the abbreviation NR (not reached).
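
The shorthand convention can be illustrated with a short sketch. The helper names are hypothetical, and the way a reached median is formatted (a number followed by "months") is an assumption made only for this example.

```python
from typing import Optional


def endpoint_shorthand(endpoint: str, months: Optional[int] = None,
                       years: Optional[int] = None) -> str:
    """Build a fixed-interval shorthand such as OS12 or PFS36.

    Exactly one of months or years must be given; years are converted
    to months because the shorthand is always expressed in months.
    """
    if (months is None) == (years is None):
        raise ValueError("give exactly one of months or years")
    total_months = months if months is not None else years * 12
    return f"{endpoint}{total_months}"


def format_median(median_months: Optional[float]) -> str:
    """Return 'NR' when the median has not been reached.

    Assumption: a reached median is shown as '<value> months'.
    """
    if median_months is None:
        return "NR"
    return f"{median_months:g} months"


if __name__ == "__main__":
    print(endpoint_shorthand("OS", months=12))
    print(endpoint_shorthand("PFS", years=3))
    print(format_median(None))
```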

Our preferred estimate of the effect ratio is the hazard ratio (HR) with 95% confidence interval (CI). We adopt the convention that an HR of less than 1 corresponds to a reduced risk of the event. Sometimes in the literature, the hazard ratio is reported inversely, where an HR of greater than 1 corresponds to a reduced risk of the event. In this case, we will convert the HR and CI by taking their reciprocals; note that the reciprocal of the original upper bound becomes the new lower bound, and vice versa.
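
A minimal sketch of the reciprocal conversion follows. The function name is hypothetical; the only subtlety is that the bounds swap places, because taking the reciprocal of positive numbers reverses their order.

```python
from typing import Tuple


def invert_hazard_ratio(hr: float, ci_lower: float,
                        ci_upper: float) -> Tuple[float, float, float]:
    """Convert an inversely reported HR and CI to the HR < 1 convention.

    Returns (hr, ci_lower, ci_upper) after taking reciprocals. The old
    upper bound yields the new lower bound and vice versa.
    """
    if min(hr, ci_lower, ci_upper) <= 0:
        raise ValueError("hazard ratios and CI bounds must be positive")
    if not ci_lower <= hr <= ci_upper:
        raise ValueError("expected ci_lower <= hr <= ci_upper")
    return 1 / hr, 1 / ci_upper, 1 / ci_lower


if __name__ == "__main__":
    # An HR of 2.0 (95% CI 1.25 to 4.0) reported with the inverse
    # convention becomes 0.5 (95% CI 0.25 to 0.8).
    print(invert_hazard_ratio(2.0, 1.25, 4.0))
```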

Adding HR and CI to the trials reported on HemOnc.org remains a work-in-progress. Given that the ratios for an experimental and a control arm are reciprocals of each other, we initially plan to report only one HR and CI for each comparison pair. By default, this will be reported for the experimental arm(s) of studies. If a study is "negative" such that the experimental arm will not be included on HemOnc.org, we will instead report the HR and CI for the control arm.

More details

What we are really interested in is whether efficacy findings from a clinical trial will work for our patient. As such, we as a medical community have historically relied on the cutoff of p=0.05 to decide whether or not a finding is significant and true. Of course, this means that even when there is no real difference, approximately 1 in 20 comparisons will produce a falsely positive finding. This "holy grail" cutoff has led to significant publication bias, which is well summarized by Dr. John Ioannidis in his seminal 2005 paper "Why Most Published Research Findings Are False." One potential solution, which we have adopted, is to report comparative efficacy "in plain English" as shown in the graphic below (link to original 2009 article).

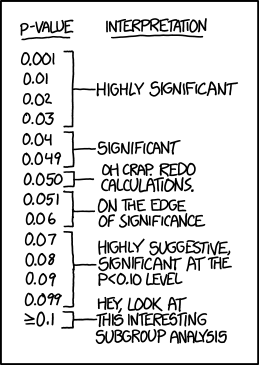

Here is another, only slightly tongue-in-cheek, way of considering p-values, from XKCD.

Examples

1. A treatment regimen with superior efficacy: BR for untreated follicular lymphoma

| Study | Evidence | Comparator | Comparative Efficacy |
|---|---|---|---|
| Rummel et al. 2013 (StiL NHL1) | Phase 3 (E-switch-ic) | R-CHOP | Superior PFS |

2. A treatment regimen which failed to demonstrate a difference in primary endpoint: gemcitabine for metastatic pancreatic cancer

| Study | Evidence | Comparator | Comparative Efficacy |
|---|---|---|---|
| Hong et al. 2013 (SMC 2008-07-065) | Randomized Phase 2 (C) | Gemcitabine & Simvastatin | Did not meet primary endpoint of TTP |

3. A treatment regimen that is non-inferior to its comparator: capecitabine & bevacizumab for metastatic breast cancer

| Study | Evidence | Comparator | Comparative Efficacy |
|---|---|---|---|
| Zielinski et al. 2016 (TURANDOT) | Phase 3 (E-switch-ic) | Paclitaxel & Bevacizumab | Non-inferior OS |

4. A treatment regimen with inferior efficacy: dexamethasone for relapsed/refractory multiple myeloma

| Study | Evidence | Comparator | Comparative Efficacy |
|---|---|---|---|
| Rajkumar et al. 2008 | Phase 3 (C) | TD | Inferior TTP |

Frequently asked questions

Q: What is the current status of efficacy labeling on hemonc.org?

A: All phase 3 trials and most randomized phase 2 trials are now labeled for efficacy. Future work includes labeling non-randomized trials with overall response rates (ORR).

Q: How do you choose to label efficacy when multiple outcomes are reported?

A: Often, a trial will report on multiple outcomes, such as overall response rate, progression-free survival, and overall survival. We generally look to the PRIMARY endpoint, as defined in the published methods. If the PRIMARY endpoint is negative but the trial reports a positive secondary finding that is more surrogate, we do NOT label this (for example, the primary endpoint was OS, for which no benefit was shown, but the investigational arm did have better PFS). If a secondary endpoint shows differential efficacy and is LESS "surrogate" than the primary endpoint (see below), we will also label by that endpoint.

Q: What about the situation where there is no clear primary endpoint?

A: This situation is common in trials published prior to 1990, and sometimes thereafter. We try to determine from the manuscript, especially the statistical analysis section, what best approximates the primary endpoint. For example, if only one endpoint is discussed in the abstract and there is no other obvious indication of what might be a primary endpoint, we will consider that as the primary endpoint. In some cases, it is impossible to determine and we have not quite settled on how best to label these trials.

Q: How do you handle changes in efficacy when trial results are updated?

A: Many trials are published at the time of preliminary findings related to the primary endpoint, and have subsequent publications as the results mature. At this time, most of these updates are added to the bibliography section of the regimen. If the strength of the efficacy assertion changes, or if a less surrogate endpoint becomes significant, we will update the efficacy label with a footnote to denote that it is an updated efficacy.

Q: Do you account for effect size?

A: Not currently, although this is certainly something we may want to include in the future, given that a highly statistically significant but extremely small effect size could be considered clinically meaningless. Likewise, regimens that have a borderline p-value due to power issues but have a large effect size, such as a 6-month improvement in overall survival in stage IV cancer, may be highly clinically meaningful.

Q: Do you have a hierarchy of surrogacy?

A: Yes, we use a three-level hierarchy to determine the strength of an outcome measure: strong endpoints, intermediate surrogate endpoints, and weak surrogate endpoints. Please see the dedicated response to treatment page for more details. Note that this hierarchy is NOT used to inform the coloration of the efficacy label, at this time.

Q: What about exceptional responders?

A: It is increasingly recognized, especially with newer therapies such as immunotherapy, that some patients may experience a remarkable response to a drug that otherwise appears to lack efficacy in the population. These patients are usually referred to as "exceptional responders" and may provide significant insights into rational treatment selection, a.k.a. precision medicine. At this time we do not make a particular effort to identify exceptional responders, nor do we consider a regimen for inclusion in HemOnc.org if the manuscript states that it generally lacks efficacy.

Q: Do you consider health-related quality of life (HRQoL) measures in efficacy?

A: Very few RCTs report on QoL measures, but when they do, we plan to include this as a measure of toxicity, not efficacy.

Toxicity

Defined generally, toxicity is the presence or absence of a negative effect (harm) on the study population. This is often also referred to as safety. As with efficacy, we only report comparative toxicity. Much of the focus on HemOnc.org has been on efficacy, due in large part to the fact that, while standardized, toxicity reporting tends to be limited in granularity and interpretability. We are currently taking two approaches to toxicity:

Toxicity information from the primary literature

Due to less granular and more subjective reporting in the primary literature, toxicity is currently reported using one of three labels:

| Decreased toxicity | Equivalent toxicity | Increased toxicity |

Example

A treatment regimen with increased toxicity: R-CHOP for untreated follicular lymphoma

| Study | Evidence | Comparator | Comparative Efficacy | Comparative Toxicity |
|---|---|---|---|---|
| Hiddemann et al. 2005 | Phase 3 | CHOP | Seems to have superior OS | Increased toxicity |

Toxicity information from companion or post hoc analyses

If a dedicated analysis of toxicity is performed, we will report using the standard coloring used above for efficacy. In particular, we consider health-related quality of life (HRQoL) analyses to be a rigorous surrogate of toxicity, and are actively adding these to the site.

Example

A treatment regimen with worse HRQoL: placebo for metastatic castrate-resistant prostate cancer

| Study | Evidence | Comparator | Comparative Efficacy | Comparative Toxicity |
|---|---|---|---|---|
| Beer et al. 2014 (PREVAIL) | Phase 3 | Enzalutamide | Inferior OS | Worse HRQoL |

Frequently asked questions

Q: What is the current status of toxicity labeling on hemonc.org?

A: A few regimens are currently labeled for toxicity; we are focusing current efforts on labeling efficacy.

Q: Are you basing the label on the reported CTCAE measures?

A: CTCAE measures are extremely valuable in that they are structured and thus reproducible. However, it is often hard to compare them directly. For example, if one regimen has grade 4 lab-based toxicity and the other has grade 2 gastrointestinal toxicity, which is the more toxic? In general, we plan to use the authors' interpretation of overall toxicity and tolerability when labeling from primary literature or, better yet, prospectively gathered quality-of-life data (see above).

Q: Do you plan to incorporate patient-reported outcomes?

A: As shown in numerous publications, patient reports of toxicity are more accurate than clinician assessments. However, they have not until recently been standardized. Now that the PRO-CTCAE is available, we expect to see more of these in the future and will incorporate them into the toxicity assessment.

Q: Do you plan to incorporate financial toxicity?

A: This is an incredibly important topic, and the reader is encouraged to read the publications of Dr. Yousuf Zafar for some excellent background. For now, we are focused primarily on clinical measures but are open to adding financial toxicity information.